Clinical research companies can no longer treat AI search as a side topic. It is already changing how buyers discover vendors, compare capabilities, and shortlist partners.

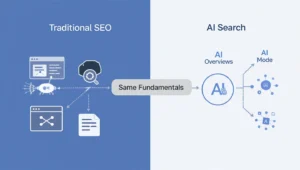

The important point is this: staying visible in AI search is not about gaming a new channel with gimmicks. Google explicitly says there are no special extra requirements for appearing in AI Overviews or AI Mode beyond being eligible for Search and following strong SEO fundamentals. At the same time, Google also makes clear that these AI features use different systems and can pull from a wider set of supporting pages than classic search. In practice, that means clinical research companies need stronger content depth, clearer expertise signals, and better technical hygiene than many of them have today.

1. Stop treating AI visibility as separate from SEO

Many teams are already making the same mistake: hunting for a secret “AI search optimization” tactic.

That is the wrong mindset.

Google’s guidance is blunt. The same foundational SEO best practices still apply to AI features in Search, including AI Overviews and AI Mode. A page must be indexed, eligible to appear in Google Search, and able to show a snippet. There is no hidden AI-only markup or magic switch.

For clinical research companies, that means the first priority is still basic visibility infrastructure:

- pages that can be crawled and indexed,

- clean site architecture,

- clear internal linking,

- proper canonicals,

- and content that actually deserves to rank.

If your site is weak in standard organic search, it will usually be weak in AI search too. That is not an opinion. It is the practical implication of how Google describes these systems.
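As a rough illustration of what checking that infrastructure can look like, the sketch below parses a page's HTML and flags three common problems: a `noindex` directive, a `nosnippet` directive, and a missing or mismatched canonical link. It uses only the Python standard library. The function name `audit_page`, the sample HTML, and the `example.com` URL are hypothetical, and a real audit would also need to consider HTTP headers and rendered JavaScript.

```python
from html.parser import HTMLParser


class IndexabilityParser(HTMLParser):
    """Collects the canonical URL and robots meta directives from a page."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.robots_directives = set()

    def handle_starttag(self, tag, attrs):
        # Attributes without a value arrive as None, so normalise to "".
        attr = {name: (value or "") for name, value in attrs}
        if tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href") or None
        elif tag == "meta" and attr.get("name", "").lower() == "robots":
            for directive in attr.get("content", "").split(","):
                self.robots_directives.add(directive.strip().lower())


def audit_page(html, page_url):
    """Return a list of human-readable indexability issues (hypothetical helper)."""
    parser = IndexabilityParser()
    parser.feed(html)
    issues = []
    if "noindex" in parser.robots_directives:
        issues.append("page is marked noindex")
    if "nosnippet" in parser.robots_directives:
        issues.append("page is marked nosnippet")
    if parser.canonical is None:
        issues.append("no canonical link found")
    elif parser.canonical.rstrip("/") != page_url.rstrip("/"):
        issues.append(f"canonical points elsewhere: {parser.canonical}")
    return issues


# Made-up page used only for illustration.
SAMPLE_HTML = """
<html><head>
<link rel="canonical" href="https://example.com/services/patient-recruitment">
<meta name="robots" content="noindex, follow">
</head><body></body></html>
"""

for issue in audit_page(SAMPLE_HTML, "https://example.com/services/patient-recruitment/"):
    print(issue)
```

Running a check like this across key service and resource pages gives a quick first pass before a fuller crawl-based audit.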

2. Build content around real buyer questions, not internal service labels

AI search rewards pages that answer complete questions, not pages that just repeat category terms.

Google explains that AI features may use a “query fan-out” approach, meaning the system can expand a user’s question into related subtopics and gather supporting pages from multiple angles before generating a response. That matters a lot for CROs, central labs, clinical technology vendors, patient recruitment firms, and specialist research partners.

A biotech or pharma decision-maker rarely searches in one neat step. They might begin with a question like:

- how to reduce enrollment delays in a rare disease study,

- what to look for in a CNS CRO,

- how to choose a central lab for global trials,

- or what causes protocol amendment delays in oncology studies.

If your website only has generic pages like “Clinical Operations Services” or “Therapeutic Expertise,” you are leaving too much ambiguity for both users and AI systems.

Clinical research companies need topic clusters that map to real decision-stage questions:

- therapeutic area challenges,

- trial phase–specific execution issues,

- country and region operational complexity,

- regulatory and quality expectations,

- vendor selection criteria,

- and cost, speed, and risk tradeoffs.

That is how you become a source page AI systems can cite, summarize, and link to.

3. Prove expertise with original evidence, not generic marketing copy

Google’s people-first content guidance emphasizes original information, substantial coverage, and clear first-hand expertise. It also says trust matters most, especially for topics that can affect health, safety, or well-being. Clinical research clearly sits close to that high-trust territory.

This is where many clinical research websites fall apart.

Too many pages say things like:

- “we deliver tailored solutions,”

- “we support end-to-end development,”

- or “we accelerate innovation.”

That copy is weak in normal SEO and even weaker in AI search.

What works better is original evidence:

- benchmark data,

- enrollment insights,

- protocol deviation patterns,

- startup timelines,

- country feasibility lessons,

- audit-readiness frameworks,

- and lessons from actual study execution.

If a company has first-party knowledge from running trials, building site networks, handling submissions, or managing patient recruitment, that knowledge must be turned into publishable assets. Google specifically recommends original information, research, and analysis as quality signals.

In this space, original evidence is not just a branding advantage. It is the difference between being skimmed over and being treated as a credible source.

4. Make authorship and company identity painfully clear

AI systems do not trust vagueness. Neither do serious buyers.

Clinical research companies should strengthen entity clarity at two levels: the company and the expert behind the content.

Google supports structured data for Organization, Article, and ProfilePage, and recommends using these formats to help Search understand who published the content, who authored it, and how those entities connect. Organization markup can help disambiguate the company itself, while Article markup supports author details, and ProfilePage markup helps define a person or organization profile more clearly.

In practice, that means every serious article should include:

- a real author,

- a credible bio,

- a dedicated author or expert page,

- a publication date and update date,

- and a clear relationship between the expert and the company.

For a clinical research brand, anonymous content is a credibility killer. A page about oncology trial recruitment written by “Marketing Team” is simply weaker than one tied to a named expert with actual operational experience.
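To make the markup side of this concrete, here is a minimal sketch that builds Article structured data as JSON-LD, linking a named author to the publishing company. The property names (`headline`, `datePublished`, `dateModified`, `author`, `worksFor`, `publisher`) come from the Schema.org vocabulary. The helper `build_article_jsonld`, the person, the company, and every URL are hypothetical placeholders, not a recommended template.

```python
import json


def build_article_jsonld(headline, published, modified, author, publisher):
    """Build an Article JSON-LD dict tying a named expert to the company."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published,   # ISO 8601 date
        "dateModified": modified,     # ISO 8601 date
        "author": {
            "@type": "Person",
            "name": author["name"],
            "jobTitle": author["job_title"],
            "url": author["profile_url"],  # dedicated author or expert page
            "worksFor": {"@type": "Organization", "name": publisher["name"]},
        },
        "publisher": {
            "@type": "Organization",
            "name": publisher["name"],
            "url": publisher["url"],
        },
    }


# All values below are made up for illustration.
article = build_article_jsonld(
    headline="Reducing enrollment delays in rare disease studies",
    published="2025-01-15",
    modified="2025-03-01",
    author={
        "name": "Jane Doe",
        "job_title": "Director of Clinical Operations",
        "profile_url": "https://example.com/experts/jane-doe",
    },
    publisher={"name": "Example CRO", "url": "https://example.com"},
)

# The resulting JSON would go inside a <script type="application/ld+json"> tag.
print(json.dumps(article, indent=2))
```

The point of the structure is the explicit link: the same organization name appears under both `publisher` and the author's `worksFor`, which states the relationship between the expert and the company rather than leaving it implied.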

5. Strengthen technical clarity so AI systems pick the right page

Bing recently introduced AI Performance reporting in Bing Webmaster Tools, specifically to show how publisher content appears in Microsoft Copilot, AI-generated summaries in Bing, and partner AI experiences. That tells you something important: visibility in AI is increasingly measurable, and the source page selected by the system matters.

Bing has also warned that duplicate or near-duplicate content can dilute authority, blur intent signals, and reduce the likelihood that the preferred page gets surfaced in AI experiences. Clear canonicals, consistent metadata, and consolidated signals matter.

That is especially relevant for clinical research websites, which often generate duplication through:

- near-identical service pages by indication,

- region variants,

- campaign landing pages,

- resource hubs with overlapping topics,

- and copied capability descriptions.

If five pages say basically the same thing about patient recruitment or clinical monitoring, AI systems may struggle to decide which page is the most authoritative answer. Clean consolidation wins.
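One simple way to find consolidation candidates is to compare page copy pairwise. The sketch below scores overlap using three-word shingles and Jaccard similarity, a generic text-similarity technique chosen here for illustration; it is not how Bing or any other search engine detects duplicates. The URLs, page text, and the 0.5 threshold are all assumptions.

```python
import re
from itertools import combinations


def shingles(text, size=3):
    """Split text into a set of overlapping word n-grams."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a, b):
    """Share of shingles two pages have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_near_duplicates(pages, threshold=0.5):
    """Return (url_a, url_b, score) for page pairs at or above the threshold."""
    sets = {url: shingles(text) for url, text in pages.items()}
    pairs = []
    for (url_a, set_a), (url_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 2)))
    return sorted(pairs, key=lambda pair: pair[2], reverse=True)


# Made-up page copy used only for illustration.
PAGES = {
    "/recruitment/oncology": "We provide patient recruitment services for "
                             "oncology trials across Europe and North America",
    "/recruitment/cardiology": "We provide patient recruitment services for "
                               "cardiology trials across Europe and North America",
    "/central-lab": "Our central lab network supports sample logistics "
                    "for global studies",
}

for url_a, url_b, score in find_near_duplicates(PAGES):
    print(url_a, url_b, score)
```

In this example only the two recruitment pages are flagged, because they differ by a single word. Pairs like that are candidates for merging, differentiating with indication-specific evidence, or pointing at one canonical page.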

6. Use structured data where it actually improves understanding

Structured data will not rescue weak content, but it does help search engines understand page meaning and relationships more clearly. Google explicitly recommends relevant markup where it applies, including Organization and Article, and supports a wide library of schema types.

For clinical research companies, useful schema often includes:

- Organization,

- Article or BlogPosting,

- BreadcrumbList,

- FAQPage where appropriate,

- and in some cases healthcare or study-related schema concepts that clarify medical research context.

Schema.org even includes a MedicalTrial type, which shows that structured vocabularies exist for clinical study content. That does not mean every site should dump exotic schema everywhere. It means companies should mark up what is true, relevant, and maintainable.

The rule is simple: use schema to reduce ambiguity, not to create noise.

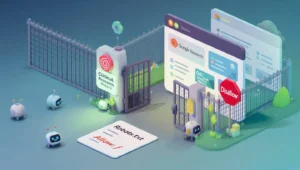

7. Do not block the crawlers that power AI discovery

This is basic, but plenty of companies still get it wrong.

OpenAI states that any public website can appear in ChatGPT search, and advises publishers to make sure they are not blocking OAI-SearchBot if they want their content to be discovered, surfaced, and clearly cited in ChatGPT summaries and snippets. It also notes that publishers can track referral traffic from ChatGPT in analytics.

So if a clinical research company says it wants visibility in AI search, but blocks key crawlers, misuses noindex, or restricts important resources behind technical barriers, that is self-sabotage.

At minimum, marketing and dev teams should audit:

- robots.txt,

- noindex usage,

- JavaScript rendering issues,

- crawl access to resource pages,

- and whether important content is public and indexable.
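The robots.txt part of that audit is easy to script. The sketch below uses Python's standard `urllib.robotparser` module to test whether specific crawler tokens may fetch a given URL. `OAI-SearchBot` is the token named above; `Googlebot` and `Bingbot` are included as the usual search crawler tokens. The robots.txt content, the helper name `blocked_crawlers`, and the URL are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Crawler user-agent tokens to check.
CRAWLERS = ["Googlebot", "Bingbot", "OAI-SearchBot"]


def blocked_crawlers(robots_txt, url, crawlers=CRAWLERS):
    """Return the crawlers that the given robots.txt text blocks from url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in crawlers if not parser.can_fetch(bot, url)]


# Made-up robots.txt that blocks one AI search crawler site-wide.
SAMPLE_ROBOTS = """
User-agent: OAI-SearchBot
Disallow: /

User-agent: *
Disallow: /private/
"""

blocked = blocked_crawlers(
    SAMPLE_ROBOTS, "https://example.com/resources/site-feasibility-guide"
)
print(blocked)
```

In practice the same function could be run against the live robots.txt for each important resource URL, so that an accidental block shows up as a list of crawler names rather than as a quiet drop in visibility.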

8. Measure AI visibility as its own reporting layer

Traditional rankings are no longer enough on their own.

Google says AI Overviews and AI Mode can show different supporting links than classic search results. Bing now provides AI Performance reporting. OpenAI allows publishers to be surfaced and cited in ChatGPT search. The obvious conclusion is that search visibility is fragmenting across interfaces, even when the underlying SEO fundamentals remain similar.

Clinical research companies should start tracking:

- branded and non-branded prompts,

- citation frequency in AI experiences,

- landing pages referenced by AI systems,

- referral traffic from AI assistants,

- and gaps between traditional rankings and AI citations.
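As a starting point for the referral-traffic item, the sketch below tallies visits by AI assistant and landing page based on the referrer hostname. The hostname-to-assistant mapping is an assumption made for illustration and should be checked against what actually appears in your own analytics. The function names and the sample hits are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against your own analytics data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "copilot.microsoft.com": "Microsoft Copilot",
}


def classify_referrer(referrer):
    """Return the AI assistant label for a referrer URL, or None."""
    host = (urlparse(referrer).hostname or "").lower()
    for known_host, label in AI_REFERRER_HOSTS.items():
        if host == known_host or host.endswith("." + known_host):
            return label
    return None


def count_ai_referrals(hits):
    """Count visits per (assistant, landing page) from (page, referrer) pairs."""
    counts = Counter()
    for landing_page, referrer in hits:
        source = classify_referrer(referrer)
        if source:
            counts[(source, landing_page)] += 1
    return counts


# Made-up log entries used only for illustration.
HITS = [
    ("/insights/cns-cro-selection", "https://chatgpt.com/"),
    ("/insights/cns-cro-selection", "https://chatgpt.com/"),
    ("/services/central-lab", "https://copilot.microsoft.com/"),
    ("/services/central-lab", "https://www.google.com/"),
    ("/contact", ""),
]

for (source, page), visits in count_ai_referrals(HITS).most_common():
    print(source, page, visits)
```

Grouping by landing page as well as by assistant matters here, because it shows which pages AI systems are actually sending people to, which can then be compared against the pages that rank in traditional search.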

That reporting layer is now part of modern search marketing.

The bottom line

Clinical research companies do not need an “AI hack.” They need better execution.

The winners in AI search will be the companies that publish the clearest answers, demonstrate real expertise, reduce ambiguity, and make their best knowledge easy for search engines and AI systems to crawl, understand, and cite.

That means:

- technically sound pages,

- topic coverage built around buyer problems,

- original evidence instead of recycled copy,

- visible expert authorship,

- clean entity signals,

- and ongoing measurement across Google, Bing, and ChatGPT-connected discovery.

In this market, vague brochure content is finished. Clinical research companies that want to stay visible in AI search need to become the most credible, most useful source on the questions their buyers actually ask.

Demand Enchance helps clinical research companies move from being found to being quoted.

Book a visibility audit, and we will show you exactly where you appear in AI answers today, where you are missing, and a four-pillar plan to fix it in 90 days.